Consider a numerical valued random phenomenon, with probability function

For the case in which the probability function

The sum written in (2.1) may involve the summation of a countably infinite number of terms and therefore is not always meaningful. For reasons made clear in section 1 of Chapter 8, the expectation
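The following minimal Python sketch makes the sum in (2.1) concrete for a law whose probability mass function lives on the nonnegative integers. The helper names `expectation_discrete` and `poisson_pmf` are hypothetical. The Poisson law with parameter 2 is only an illustrative choice. Because its support is infinite, the sum is truncated after 100 terms, which is harmless here only because the omitted tail is negligibly small. The absolute sum is returned as well, since the expectation is defined only when that sum converges.

```python
import math

def expectation_discrete(g, pmf, support):
    """Return (sum of g(x) p(x), sum of |g(x)| p(x)) over a finite support.

    The second value is the absolute sum; the expectation is well defined
    only if that sum stays bounded as the support is enlarged.
    """
    total = 0.0
    abs_total = 0.0
    for x in support:
        p = pmf(x)
        total += g(x) * p
        abs_total += abs(g(x)) * p
    return total, abs_total

def poisson_pmf(lam):
    """Poisson probability mass function with parameter lam (illustrative)."""
    return lambda k: math.exp(-lam) * lam ** k / math.factorial(k)

# Truncate the infinite Poisson support at k = 99; the omitted tail is negligible.
mean, abs_sum = expectation_discrete(lambda x: x, poisson_pmf(2.0), range(100))
second, _ = expectation_discrete(lambda x: x * x, poisson_pmf(2.0), range(100))
```

For this law the sketch returns values very close to E[X] = 2 and E[X²] = 6.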

For the case in which the probability function

The integral written in (2.3) is an improper integral and therefore is not always meaningful. Before one can speak of the expectation
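A corresponding sketch handles the integral in (2.3). The improper integral is replaced by a midpoint-rule sum over a finite range. This is legitimate only when the tails outside that range contribute negligibly to the integral of |g(x)| f(x). The names `expectation_continuous` and `exponential_pdf` are illustrative assumptions, as are the exponential law with parameter 1/2 (whose mean is 2) and the cutoff at 100.

```python
import math

def expectation_continuous(g, pdf, lo, hi, n=200_000):
    """Midpoint-rule approximation of the integral of g(x) f(x) over [lo, hi].

    Cutting the improper integral down to [lo, hi] is justified only when the
    tails outside [lo, hi] contribute negligibly to the integral of |g(x)| f(x).
    """
    h = (hi - lo) / n
    total = 0.0
    for i in range(n):
        x = lo + (i + 0.5) * h
        total += g(x) * pdf(x)
    return total * h

lam = 0.5

def exponential_pdf(x):
    """Exponential density with parameter lam (an illustrative choice)."""
    return lam * math.exp(-lam * x) if x >= 0 else 0.0

# The exponential law with parameter 1/2 has mean 2; the tail beyond 100 is negligible.
mean = expectation_continuous(lambda x: x, exponential_pdf, 0.0, 100.0)
```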

A useful tool for determining whether or not the expectation

A discussion of the definition of the expectation in the case in which the probability function must be specified by the distribution function is given in section 6.

The expectation

A special terminology is introduced to describe the expectation

We call

For a continuous probability law, with probability density function

It may be shown that the mean of a probability law has the following meaning. Suppose one makes a sequence

These successive arithmetic means,
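A simulation lets one watch the successive arithmetic means converge, though it does not prove that they do. The sketch below assumes independent observations uniformly distributed on [0, 1], whose mean is 1/2. The helper `running_means`, the seed, and the sample size are arbitrary choices.

```python
import random

def running_means(samples):
    """Successive arithmetic means (x1 + ... + xn) / n for n = 1, 2, ..."""
    means = []
    total = 0.0
    for n, x in enumerate(samples, start=1):
        total += x
        means.append(total / n)
    return means

rng = random.Random(12345)                        # fixed seed so the run is reproducible
draws = [rng.random() for _ in range(100_000)]    # uniform on [0, 1), mean 1/2
means = running_means(draws)
```

Printing `means[9]`, `means[999]`, and `means[-1]` shows the early averages wandering and the later ones settling near 1/2.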

We call

For a continuous probability law, with probability density function

More generally, for any integer

Next, for any real number

The second central moment

The square root
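The moments, central moments, variance, and standard deviation of a discrete law can be computed exactly with rational arithmetic. In this sketch the law is a fair die, chosen only for illustration. The helper `moment` is hypothetical. It computes the r-th moment about an arbitrary point, so the moments (about 0) and the central moments (about the mean) come from the same function.

```python
from fractions import Fraction
import math

def moment(pmf, r, about=0):
    """r-th moment of a discrete law about the point `about`: sum of (x - about)^r p(x)."""
    return sum((x - about) ** r * p for x, p in pmf.items())

# A fair die: each of the values 1, ..., 6 has probability 1/6.
die = {x: Fraction(1, 6) for x in range(1, 7)}

mean = moment(die, 1)                        # first moment
mean_square = moment(die, 2)                 # second moment
variance = moment(die, 2, about=mean)        # second central moment
third_central = moment(die, 3, about=mean)   # third central moment
sd = math.sqrt(variance)                     # standard deviation
```

For the die the sketch yields mean 7/2, mean square 91/6, variance 35/12, and a third central moment of 0. The last value reflects the symmetry of the law about its mean.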

Example 2A. The normal probability law with parameters

Equation (2.12) follows from (2.20) and (2.22) of Chapter 4 and the fact that for any integrable function

From (2.12) and (2.13) it follows that the mean
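A large sample gives an informal check that the normal law has mean m and variance σ². Its average and spread should lie close to those values. The sketch writes the parameters as m and σ, and the values m = 1, σ = 2 are arbitrary. It uses the standard-library `random.gauss`. Sampling only illustrates the result and does not replace the derivation from (2.12) and (2.13).

```python
import random
import statistics

m, sigma = 1.0, 2.0                   # illustrative parameter values
rng = random.Random(2024)             # fixed seed for reproducibility
draws = [rng.gauss(m, sigma) for _ in range(100_000)]

sample_mean = statistics.fmean(draws)                         # should be near m
sample_variance = statistics.pvariance(draws, mu=sample_mean) # should be near sigma**2
```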

The operation of taking expectations has certain basic properties with which one may perform various formal manipulations. To begin with, we have the following properties for any constant

Equations (2.15) to (2.19) are immediate consequences of the definition of expectation. We write out the details only for the case in which the expectations are taken with respect to a continuous probability law with probability density function

Equation (2.19) follows from (2.18), applied first with
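Basic formal properties of expectation can be spot-checked exactly on a small discrete law. The sketch below tests four of them:

- the expectation of a constant;
- linearity;
- the preservation of inequalities;
- the bound |E[g]| ≤ E[|g|].

We take these to be representative of the properties listed above. The law, the functions `g1` and `g2`, and the constant `c` are arbitrary illustrative choices.

```python
from fractions import Fraction

def expect(g, pmf):
    """Expectation of g(X) under a discrete law given as {value: probability}."""
    return sum(g(x) * p for x, p in pmf.items())

law = {-1: Fraction(1, 5), 0: Fraction(2, 5), 3: Fraction(2, 5)}
c = Fraction(7, 3)

def g1(x):
    return x * x

def g2(x):
    return 2 * x + 1

const_ok = expect(lambda x: c, law) == c
scale_ok = expect(lambda x: c * g1(x), law) == c * expect(g1, law)
sum_ok = expect(lambda x: g1(x) + g2(x), law) == expect(g1, law) + expect(g2, law)
# g1(x) <= g1(x) + 1 for every x, so the expectations must be ordered the same way.
order_ok = expect(g1, law) <= expect(lambda x: g1(x) + 1, law)
abs_ok = abs(expect(g2, law)) <= expect(lambda x: abs(g2(x)), law)
```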

We next derive an extremely important expression for the variance of a probability law:

In words, the variance of a probability law is equal to its mean square minus its square mean. To prove (2.20), we write, letting
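The identity "variance equals mean square minus square mean" is easy to confirm exactly. In this sketch the three-point law is an illustrative assumption. The variance is computed once from its definition as a second central moment and once from the shortcut.

```python
from fractions import Fraction

law = {0: Fraction(1, 2), 1: Fraction(1, 4), 4: Fraction(1, 4)}

mean = sum(x * p for x, p in law.items())
mean_square = sum(x * x * p for x, p in law.items())
variance_direct = sum((x - mean) ** 2 * p for x, p in law.items())
variance_shortcut = mean_square - mean ** 2   # mean square minus square mean
```

Both routes give 43/16 for this law.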

In the remainder of this section we compute the mean and variance of various probability laws. A tabulation of the results obtained is given in Tables 3A and 3B at the end of section 3.

Example 2C. The Bernoulli probability law with parameter

Example 2D. The binomial probability law with parameters

Its mean square is given by

To evaluate

Since

Consequently,
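The standard results for the binomial law are mean np and variance npq, with q = 1 - p. They can be confirmed by summing directly over the probability mass function with exact fractions. The helper `binomial_mean_variance` and the parameter values n = 10, p = 1/3 are illustrative. Setting n = 1 gives the Bernoulli law of Example 2C, with mean p and variance pq.

```python
from fractions import Fraction
from math import comb

def binomial_mean_variance(n, p):
    """Mean and variance of the binomial law, summed directly over its pmf."""
    q = 1 - p
    pmf = {k: comb(n, k) * p ** k * q ** (n - k) for k in range(n + 1)}
    mean = sum(k * pk for k, pk in pmf.items())
    mean_square = sum(k * k * pk for k, pk in pmf.items())
    return mean, mean_square - mean ** 2

n, p = 10, Fraction(1, 3)
mean, variance = binomial_mean_variance(n, p)
bern_mean, bern_variance = binomial_mean_variance(1, p)   # n = 1 is the Bernoulli law
```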

Example 2E. The hypergeometric probability law with parameters

Notice that the mean of the hypergeometric probability law is the same as that of the corresponding binomial probability law, whereas the variances differ by a factor that is approximately equal to 1 if the ratio
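The sketch below makes the comparison with the binomial law numerical by computing the hypergeometric mean and variance exactly from the probability mass function. It assumes that n balls are drawn without replacement from an urn of N balls, of which `white` are white, so that p = white/N. The parameter names and values are ours. The results can be compared with the standard closed forms np and npq(N - n)/(N - 1).

```python
from fractions import Fraction
from math import comb

def hypergeometric_mean_variance(N, white, n):
    """Mean and variance of the number of white balls among n drawn without
    replacement from an urn of N balls, `white` of which are white."""
    lo, hi = max(0, n - (N - white)), min(n, white)   # possible numbers of white balls
    pmf = {k: Fraction(comb(white, k) * comb(N - white, n - k), comb(N, n))
           for k in range(lo, hi + 1)}
    mean = sum(k * pk for k, pk in pmf.items())
    variance = sum(k * k * pk for k, pk in pmf.items()) - mean ** 2
    return mean, variance

N, white, n = 20, 8, 5
p = Fraction(white, N)
q = 1 - p
mean, variance = hypergeometric_mean_variance(N, white, n)
```

With N = 20, eight white balls, and n = 5, the mean is 2, the same as the binomial value. The variance is 18/19, smaller than the binomial value 6/5 by the factor 15/19.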

Example 2F. The uniform probability law over the interval

Note that the variance of the uniform probability law depends only on the length of the interval, whereas the mean is equal to the mid-point of the interval. The higher moments of the uniform probability law are also easily obtained:
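Integrating x^r / (b - a) over [a, b] gives the moments of the uniform law on [a, b] in closed form: (b^{r+1} - a^{r+1}) / ((r + 1)(b - a)). The sketch below evaluates that formula with exact fractions. The function name and the interval [2, 5] are illustrative.

```python
from fractions import Fraction

def uniform_moment(a, b, r):
    """E[X^r] for X uniform on [a, b]: (b^(r+1) - a^(r+1)) / ((r + 1)(b - a))."""
    a, b = Fraction(a), Fraction(b)
    return (b ** (r + 1) - a ** (r + 1)) / ((r + 1) * (b - a))

a, b = 2, 5
mean = uniform_moment(a, b, 1)
variance = uniform_moment(a, b, 2) - mean ** 2   # mean square minus square mean
```

Here the mean is 7/2, the mid-point of [2, 5]. The variance is 3/4 = (5 - 2)²/12, in agreement with the remark that it depends only on the length of the interval.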

Example 2G. The Cauchy probability law with parameters

The mean

However, for

do exist, as one may see by applying theoretical exercise 2.1.
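The failure of the mean to exist can be seen quantitatively. For the Cauchy density with location 0 and scale β, the integral of |x| f(x) over [-R, R] equals (β/π) log(1 + R²/β²). This grows without bound as R increases, so the improper integral defining E[|X|] diverges. The sketch below checks that closed form against a midpoint-rule integral and tabulates its growth. The helper names are hypothetical, and writing the location and scale as 0 and β is an assumption about the parametrization.

```python
import math

def cauchy_pdf(x, beta=1.0):
    """Cauchy density with location 0 and scale beta."""
    z = x / beta
    return 1.0 / (math.pi * beta * (1.0 + z * z))

def truncated_abs_mean(R, beta=1.0):
    """Closed form of the integral of |x| f(x) over [-R, R]: (beta/pi) log(1 + R^2/beta^2)."""
    return (beta / math.pi) * math.log1p((R / beta) ** 2)

def midpoint(f, lo, hi, n=100_000):
    """Midpoint-rule approximation of the integral of f over [lo, hi]."""
    h = (hi - lo) / n
    return h * sum(f(lo + (i + 0.5) * h) for i in range(n))

numeric = midpoint(lambda x: abs(x) * cauchy_pdf(x), -10.0, 10.0)
# Each hundredfold increase in R adds roughly (2/pi) log(100) to the truncated integral.
growth = [truncated_abs_mean(R) for R in (1e1, 1e3, 1e5, 1e7)]
```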

Theoretical Exercises

2.1. Test for convergence or divergence of infinite series and improper integrals. Prove the following statements. Let

both exist and are finite, then

converge absolutely; if, for some

2.2. Pareto’s distribution with parameters

Show that Pareto’s distribution possesses a finite
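The following sketches the moment calculation for exercise 2.2 under an assumed parametrization. We take the Pareto density to be r A^r / x^{r+1} for x ≥ A (and 0 otherwise), with r > 0 and A > 0. These symbol names are ours and may not match those of the exercise. The k-th moment is then finite exactly when k < r, in which case it equals r A^k / (r - k).

```python
from fractions import Fraction

def pareto_moment(k, r, A):
    """k-th moment of the (assumed) density r A^r / x^(r+1) on x >= A.

    The integral of x^k r A^r x^-(r+1) over [A, infinity) converges exactly
    when k < r, and then equals r A^k / (r - k); None signals an infinite moment.
    """
    r, A = Fraction(r), Fraction(A)
    if k >= r:
        return None
    return r * A ** k / (r - k)

mean = pareto_moment(1, 3, 2)          # 3 * 2 / 2 = 3
mean_square = pareto_moment(2, 3, 2)   # 3 * 4 / 1 = 12
third = pareto_moment(3, 3, 2)         # k = r, so the moment is infinite
```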

2.3. “Student’s”

Note that “Student’s”

2.4. A characterization of the mean. Consider a probability law with finite mean

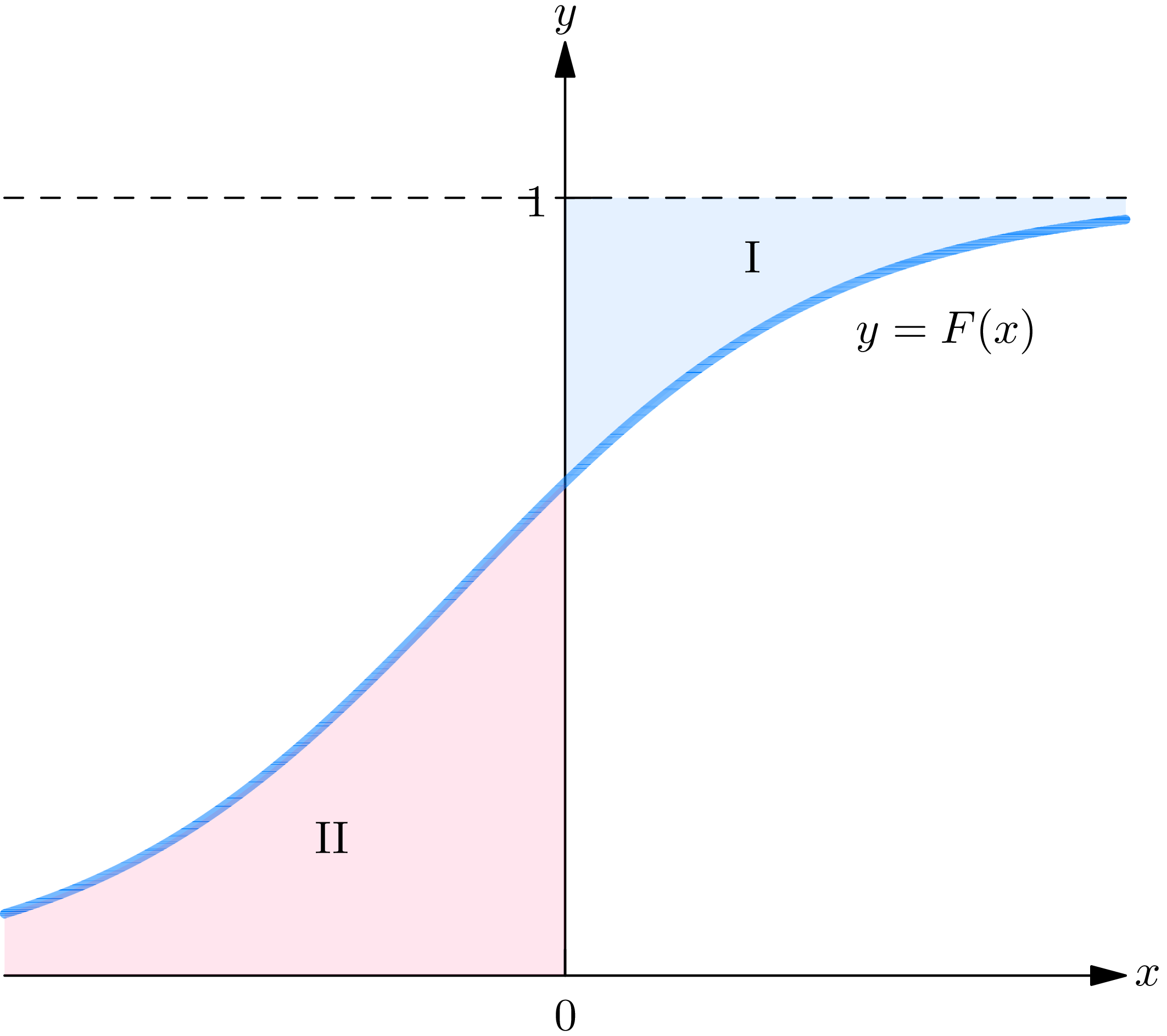
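One classical characterization of the mean is that it minimizes the mean squared deviation E[(X - a)²] over all real a, because E[(X - a)²] = Var(X) + (m - a)². That this is the characterization exercise 2.4 has in view is our assumption. The sketch below checks the identity exactly at a grid of points a for an illustrative three-point law. It then confirms that the minimizing grid point is the mean.

```python
from fractions import Fraction

law = {0: Fraction(1, 2), 1: Fraction(1, 4), 4: Fraction(1, 4)}
mean = sum(x * p for x, p in law.items())
variance = sum((x - mean) ** 2 * p for x, p in law.items())

def mean_squared_deviation(a):
    """E[(X - a)^2] for the illustrative law above."""
    return sum((x - a) ** 2 * p for x, p in law.items())

# E[(X - a)^2] = variance + (mean - a)^2, so the minimum over a is attained at a = mean.
candidates = [Fraction(k, 4) for k in range(-8, 21)]
identity_holds = all(mean_squared_deviation(a) == variance + (mean - a) ** 2 for a in candidates)
best = min(candidates, key=mean_squared_deviation)
```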

2.5. A geometrical interpretation of the mean of a probability law. Show that for a continuous probability law with probability density function

Consequently the mean

These equations may be interpreted geometrically. Plot the graph
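A standard form of this result writes the mean of a continuous law as the integral of 1 - F(x) over (0, ∞) minus the integral of F(x) over (-∞, 0). These are the two areas that the geometrical interpretation refers to. The sketch below evaluates both areas by the midpoint rule for the uniform law on [-1, 3], an illustrative choice whose mean is 1. The helpers `midpoint` and `uniform_cdf` are hypothetical.

```python
def midpoint(f, lo, hi, n=10_000):
    """Midpoint-rule approximation of the integral of f over [lo, hi]."""
    h = (hi - lo) / n
    return h * sum(f(lo + (i + 0.5) * h) for i in range(n))

def uniform_cdf(x, a=-1.0, b=3.0):
    """Distribution function of the uniform law on [a, b] (illustrative choice)."""
    if x <= a:
        return 0.0
    if x >= b:
        return 1.0
    return (x - a) / (b - a)

# Outside [-1, 3] the integrands 1 - F(x) (for x > 0) and F(x) (for x < 0) vanish,
# so the infinite ranges can be cut to [0, 3] and [-1, 0].
area_right = midpoint(lambda x: 1.0 - uniform_cdf(x), 0.0, 3.0)   # 9/8
area_left = midpoint(uniform_cdf, -1.0, 0.0)                      # 1/8
mean = area_right - area_left
```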

2.6. A geometrical interpretation of the higher moments. Show that the

Use (2.41) to interpret the

2.7. The relation between the moments and central moments of a probability law. Show that from a knowledge of the moments of a probability law one may obtain a knowledge of the central moments, and conversely. In particular, it is useful to have expressions for the first 4 central moments in terms of the moments. Show that
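The standard expressions for the second, third, and fourth central moments in terms of the moments m1, ..., m4 are:

- μ2 = m2 - m1²
- μ3 = m3 - 3 m1 m2 + 2 m1³
- μ4 = m4 - 4 m1 m3 + 6 m1² m2 - 3 m1⁴

The sketch below checks them exactly against central moments computed directly from an illustrative three-point law. The names are hypothetical.

```python
from fractions import Fraction

def central_from_raw(m1, m2, m3, m4):
    """Second, third, and fourth central moments in terms of the moments m1, ..., m4."""
    mu2 = m2 - m1 ** 2
    mu3 = m3 - 3 * m1 * m2 + 2 * m1 ** 3
    mu4 = m4 - 4 * m1 * m3 + 6 * m1 ** 2 * m2 - 3 * m1 ** 4
    return mu2, mu3, mu4

law = {0: Fraction(1, 2), 1: Fraction(1, 4), 4: Fraction(1, 4)}
raw = [sum(x ** k * p for x, p in law.items()) for k in range(1, 5)]   # m1, ..., m4
mean = raw[0]
direct = [sum((x - mean) ** k * p for x, p in law.items()) for k in range(2, 5)]
from_raw = central_from_raw(*raw)
```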

2.8. The square mean is less than or equal to the mean square. Show that

Give an example of a probability law whose mean square

2.9. The mean is not necessarily greater than or equal to the variance. The binomial and the Poisson are probability laws having the property that their mean

2.10. The median of a probability law. The mean of a probability law provides a measure of the “mid-point” of a probability distribution. Another such measure is provided by the median of a probability law, denoted by

If the probability law is continuous, the median
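Take a continuous law whose distribution function F passes through the value 1/2. Its median is a solution of F(x) = 1/2, which bisection can locate. In the sketch below the exponential law with parameter 1/2 is an illustrative choice. Its median is (log 2)/0.5 ≈ 1.386, noticeably smaller than its mean 2. The helper `median_by_bisection` is hypothetical.

```python
import math

def median_by_bisection(cdf, lo, hi, tol=1e-12):
    """Find x in [lo, hi] with cdf(x) = 1/2, assuming cdf is continuous and
    nondecreasing with cdf(lo) < 1/2 < cdf(hi)."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if cdf(mid) < 0.5:
            lo = mid   # the median lies to the right of mid
        else:
            hi = mid   # the median lies at or to the left of mid
    return 0.5 * (lo + hi)

lam = 0.5
median = median_by_bisection(lambda x: 1.0 - math.exp(-lam * x), 0.0, 100.0)
```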

2.11. The mode of a continuous or discrete probability law. For a continuous probability law with probability density function
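For a discrete law, a mode is a value of maximal probability, and scanning the probability mass function finds it. The sketch below does this for an illustrative binomial law with n = 10 and p = 1/3. The result, 3, agrees with the familiar rule that the binomial mode is the integer part of (n + 1)p when (n + 1)p is not an integer. The helper name is hypothetical.

```python
from fractions import Fraction
from math import comb, floor

def binomial_mode(n, p):
    """Value of k maximizing the binomial pmf (the smallest such k on ties)."""
    q = 1 - p
    pmf = {k: comb(n, k) * p ** k * q ** (n - k) for k in range(n + 1)}
    return max(pmf, key=pmf.get)

n, p = 10, Fraction(1, 3)
mode = binomial_mode(n, p)
rule = floor((n + 1) * p)   # integer part of (n + 1)p = 11/3
```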

2.12. The interquartile range of a probability law. In addition to the variance, other measures of the dispersion of a probability distribution may be considered (especially if the variance is infinite). The most important of these is the interquartile range of the probability law, defined as follows: for any number

(i) Show that the ratio of the interquartile range to the standard deviation is (a), for the normal probability law with parameters

(ii) Show that the Cauchy probability law specified by the probability density function
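Quantile functions allow both parts of exercise 2.12 to be explored numerically. For the normal law the sketch uses `statistics.NormalDist.inv_cdf` from the Python standard library. For the Cauchy law it uses the closed-form quantile α + β tan(π(u - 1/2)). The helper names and parameter values are illustrative. The normal ratio comes out near 1.349 whatever the parameters. The standard Cauchy law (α = 0, β = 1) has interquartile range 2, even though its variance does not exist.

```python
import math
from statistics import NormalDist

def normal_iqr_to_sd(mu=0.0, sigma=1.0):
    """Interquartile range divided by the standard deviation for a normal law."""
    dist = NormalDist(mu, sigma)
    return (dist.inv_cdf(0.75) - dist.inv_cdf(0.25)) / sigma

def cauchy_quantile(u, alpha=0.0, beta=1.0):
    """Inverse distribution function of the Cauchy law with location alpha and scale beta."""
    return alpha + beta * math.tan(math.pi * (u - 0.5))

ratio = normal_iqr_to_sd(5.0, 3.0)   # does not depend on the parameters
cauchy_iqr = cauchy_quantile(0.75) - cauchy_quantile(0.25)
```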

Exercises

In exercises 2.1 to 2.7, compute the mean and variance of the probability law specified by the probability density function, probability mass function, or distribution function given.

2.1.

Answer

Mean (i)

2.2.

2.3.

Answer

Mean (i) does not exist, (ii) 0, (iii) 0; variance (i) does not exist, (ii) 3, (iii) 1.

2.4.

2.5.

Answer

Mean (i)

2.6.

2.7.

Answer

Mean (i)

2.8. Compute the means and variances of the probability laws obeyed by the numerical valued random phenomena described in exercise 4.1 of Chapter 4.

2.9. For what values of

Answer

(i)

- For the benefit of the reader acquainted with the theory of Lebesgue integration, let it be remarked that if the integral in (2.3) is defined as an integral in the sense of Lebesgue then the notion of expectation