Table of Contents

- 19.1 INTRODUCTION

- 19.2 LECTURE

- 19.2.1 Finding Optima with Gradients

- 19.2.2 Unveiling Critical Points

- 19.2.3 The Second Derivative Test Steps In

- 19.2.4 Positive and Negative Definite Matrices

- 19.2.5 Unveiling the Role of Positive Definite Hessians

- 19.2.6 Classifying Extrema in Two Dimensions

- 19.2.7 Morse Functions and the Second Derivative Test

- 19.2.8 From Hessian to Gauss Curvature

- 19.2.9 The Morse Lemma

- 19.3 EXAMPLES

- EXERCISES

19.1 INTRODUCTION

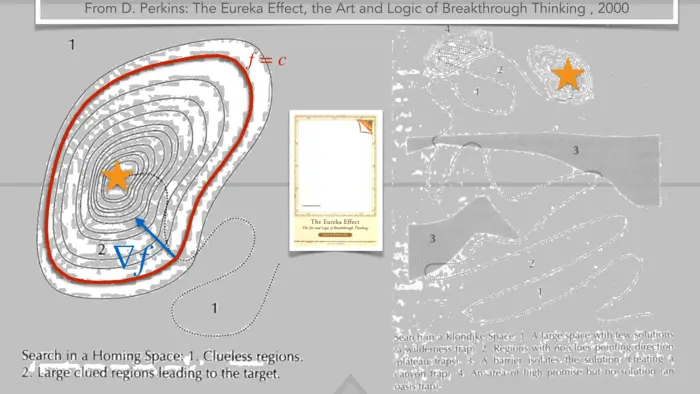

19.1.1 Exploring Learning as an Optimization Process

Learning is an optimization process with the goal of increasing knowledge, skills and creative power. This applies to education as well as to machine learning. To track the learning process, we need a function that measures progress. An old-fashioned metric is the GPA, which averages grades in an educational system; another is an IQ score measured by tests. Another metric, in a research setting, is a social network score such as the number of citations or the h-index. For a car driving autonomously it could be the

19.1.2 Will AI Conquer Every Domain?

Once the framework and the function

19.1.3 Machine Learning’s Advantage in Gradient-Based Optimization

Once a machine knows the function

19.1.4 Using Gradients to Find the Direction of Improvement

Let us first look at the rate of change of a function along a direction
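As a minimal sketch of the idea, the following Python code estimates the gradient by central differences and uses it to compute a rate of change along a direction. It assumes the standard formula for differentiable functions, namely that the directional derivative is the dot product of the gradient with the direction. The function `score` and the helper names are hypothetical and chosen only for illustration.

```python
def numerical_gradient(f, point, h=1e-5):
    """Estimate the gradient of f at point using central differences."""
    grad = []
    for i in range(len(point)):
        forward = list(point)
        backward = list(point)
        forward[i] += h
        backward[i] -= h
        grad.append((f(forward) - f(backward)) / (2 * h))
    return grad


def directional_derivative(f, point, direction, h=1e-5):
    """Rate of change of f at point along direction: gradient dot direction."""
    grad = numerical_gradient(f, point, h)
    return sum(g * v for g, v in zip(grad, direction))


def score(p):
    """Hypothetical progress function with a single maximum at (1, -2)."""
    x, y = p
    return -(x - 1) ** 2 - (y + 2) ** 2


start = [0.0, 0.0]
grad = numerical_gradient(score, start)

# A small step along the gradient should increase the score.
stepped = [start[0] + 0.1 * grad[0], start[1] + 0.1 * grad[1]]
improved = score(stepped) > score(start)
```

In this sketch the gradient at the origin is approximately (2, -4), and a small step in that direction raises the value of `score`, which is the sense in which the gradient points toward improvement.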

19.2 LECTURE

19.2.1 Finding Optima with Gradients

All functions are assumed here to be in

Theorem 1. If

Proof. We prove this by contradiction. Assume

19.2.2 Unveiling Critical Points

A point

19.2.3 The Second Derivative Test Steps In

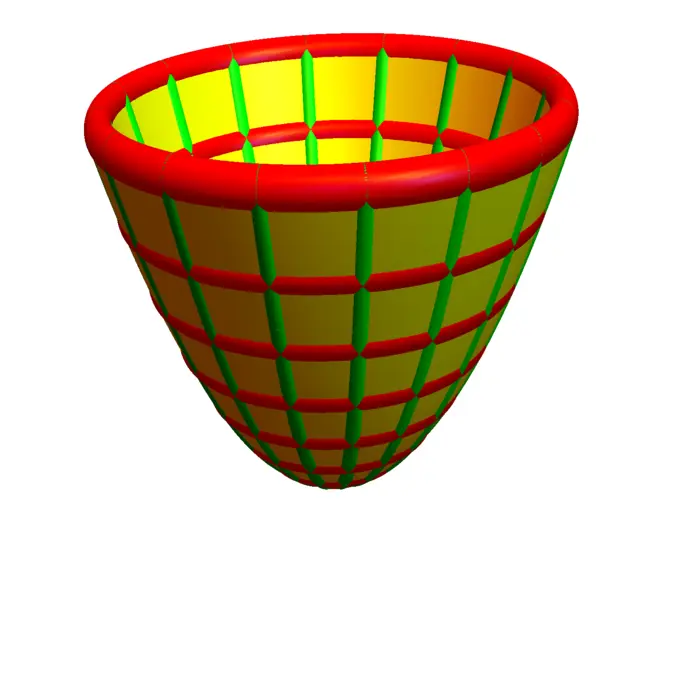

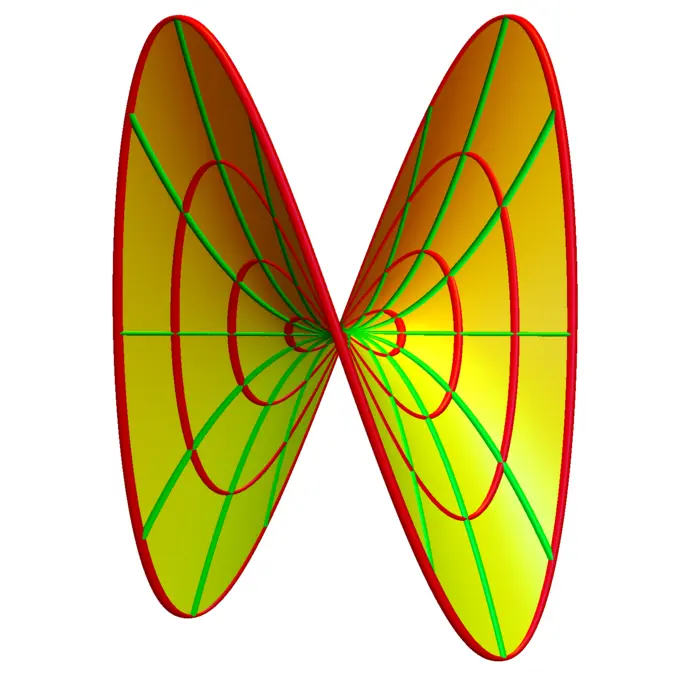

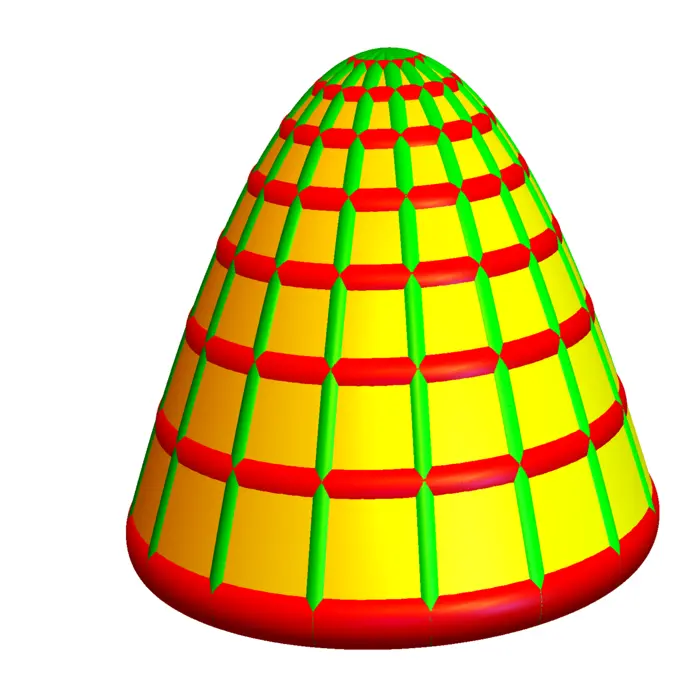

As in one dimension, having a critical point does not guarantee that the point is a local maximum or minimum. The second derivative test in single-variable calculus assures that if

19.2.4 Positive and Negative Definite Matrices

A matrix
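One possible way to check definiteness numerically is sketched below. It assumes the usual definition (a symmetric matrix A is positive definite if v·Av > 0 for every nonzero vector v) and relies on the standard fact that a symmetric matrix is positive definite exactly when its Cholesky factorization succeeds with strictly positive pivots. The function names and the tolerance `tol` are illustrative choices, not taken from the text.

```python
import math


def is_positive_definite(a, tol=1e-12):
    """Try a Cholesky factorization a = L L^T of a symmetric matrix.

    Returns True if every pivot is strictly positive, which is
    equivalent to a being positive definite.
    """
    n = len(a)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                pivot = a[i][i] - s
                if pivot <= tol:
                    return False  # non-positive pivot: not positive definite
                lower[i][i] = math.sqrt(pivot)
            else:
                lower[i][j] = (a[i][j] - s) / lower[j][j]
    return True


def is_negative_definite(a, tol=1e-12):
    """a is negative definite exactly when -a is positive definite."""
    negated = [[-entry for entry in row] for row in a]
    return is_positive_definite(negated, tol)
```

For instance, `is_positive_definite([[2, 1], [1, 2]])` holds, while `[[1, 2], [2, 1]]` is neither positive nor negative definite because it has eigenvalues of both signs.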

19.2.5 Unveiling the Role of Positive Definite Hessians

We say

Theorem 2. Assume

Proof. As

19.2.6 Classifying Extrema in Two Dimensions

Let us look at the case where

In this two-dimensional case, we can classify the critical points if the determinant

19.2.7 Morse Functions and the Second Derivative Test

We say

Theorem 3. Assume

- If

- If

- If

Proof. After translation
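The two-dimensional second derivative test can be sketched in a few lines of Python. The sketch assumes the standard formulation: with D = f_xx f_yy - f_xy^2 the determinant of the Hessian at a critical point, D > 0 with f_xx > 0 gives a local minimum, D > 0 with f_xx < 0 gives a local maximum, and D < 0 gives a saddle point. The function name and the returned labels are hypothetical.

```python
def classify_critical_point(fxx, fxy, fyy):
    """Classify a critical point from the second partial derivatives there.

    Uses D = fxx * fyy - fxy**2, the determinant of the 2x2 Hessian.
    """
    d = fxx * fyy - fxy ** 2
    if d > 0:
        # D > 0 forces fxx to be nonzero, so its sign decides the type.
        return "local minimum" if fxx > 0 else "local maximum"
    if d < 0:
        return "saddle point"
    # D = 0: the critical point is degenerate and the test says nothing.
    return "inconclusive"


# Second derivatives at the origin for three simple quadratic functions.
bowl = classify_critical_point(2, 0, 2)      # x^2 + y^2
dome = classify_critical_point(-2, 0, -2)    # -x^2 - y^2
saddle = classify_critical_point(2, 0, -2)   # x^2 - y^2
flat = classify_critical_point(0, 0, 0)      # x^4 + y^4, not Morse at the origin
```

The last case illustrates why the Morse condition matters: when D vanishes, the second derivatives alone cannot decide the nature of the critical point.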

19.2.8 From Hessian to Gauss Curvature

One can ask, why

19.2.9 The Morse Lemma

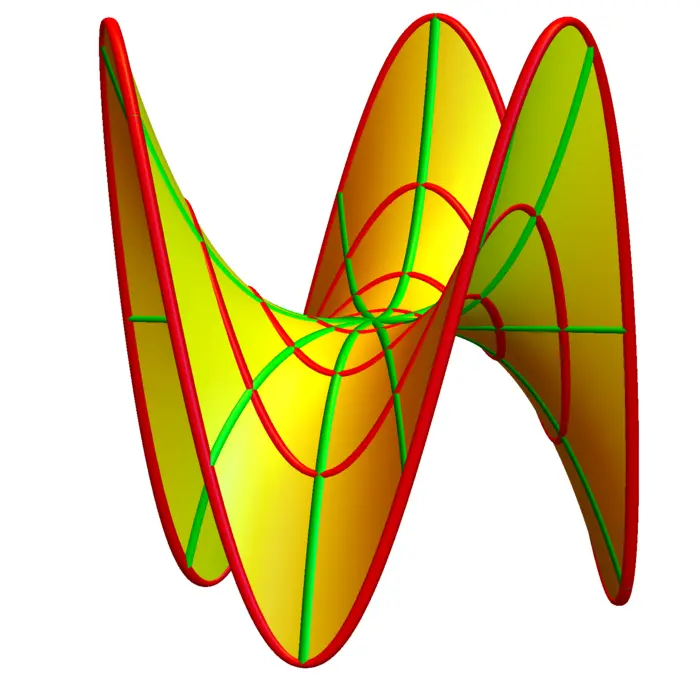

In higher dimensions, the situation is described by the Morse lemma. It states that near a critical point there is a coordinate change

Theorem 4. Near a Morse critical point

Proof. We use induction with respect to

- Induction foundation: For

- Induction step

◻

19.3 EXAMPLES

Example 1. Q: Classify the critical points of

A: As

EXERCISES

Exercise 1.

- Classify the critical points of the function

- Now do the same for

Exercise 2. Find all critical points of the

P.S. Area

Exercise 3. Where on the parametrized surface

Exercise 4. Find all the critical points of the function

Exercise 5.

- Find a function

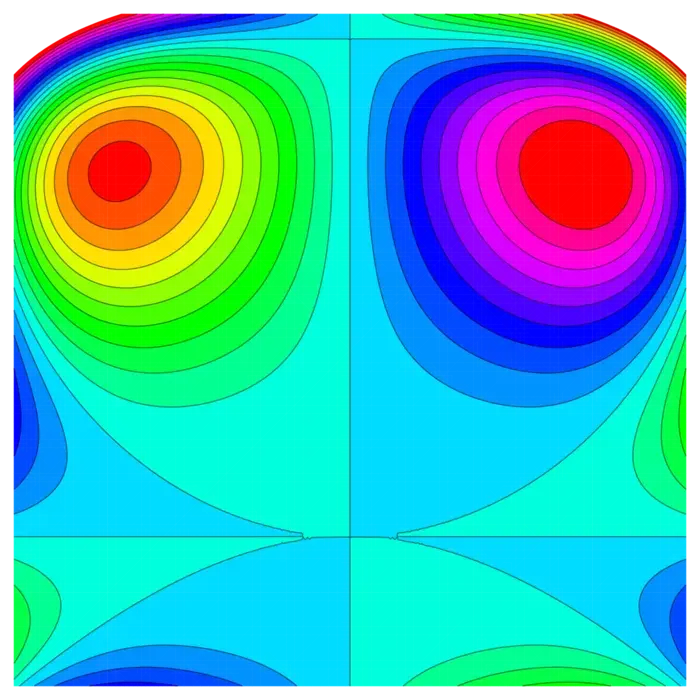

- You see below a contour map of a function of two variables. How many critical points are there? Is the function a Morse function?

There could be resistance: humans might decide not to cite scientific breakthroughs by machines. On the other hand, who would not want to learn a "theory of everything" even if it is discovered by a machine?