
20.1 INTRODUCTION

20.1.1 Gradients for Optimal Solutions with Constraints

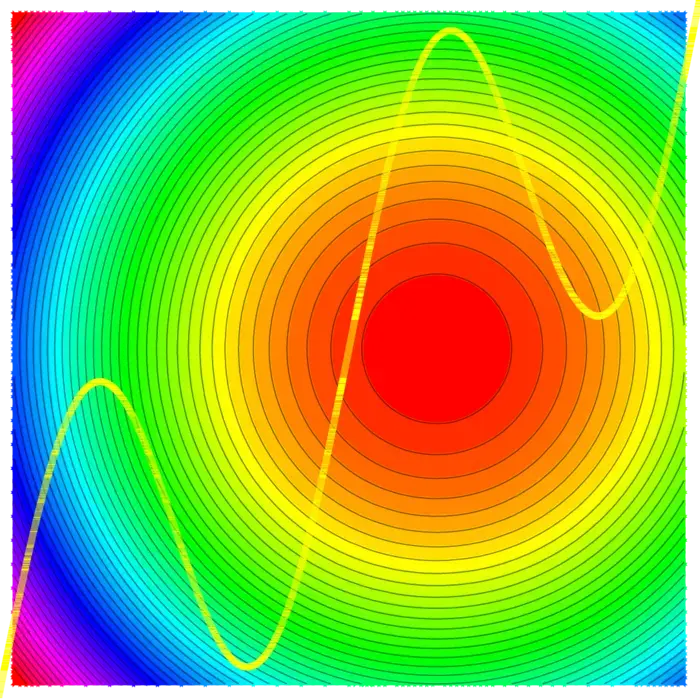

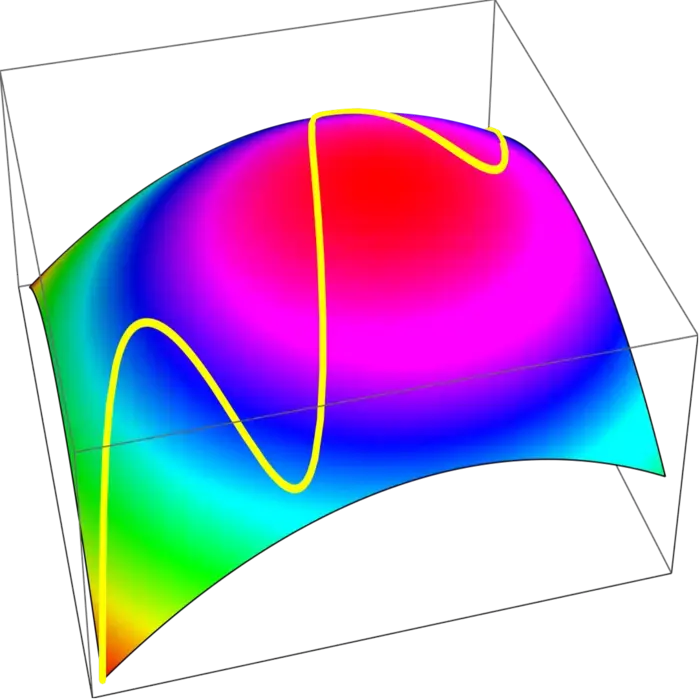

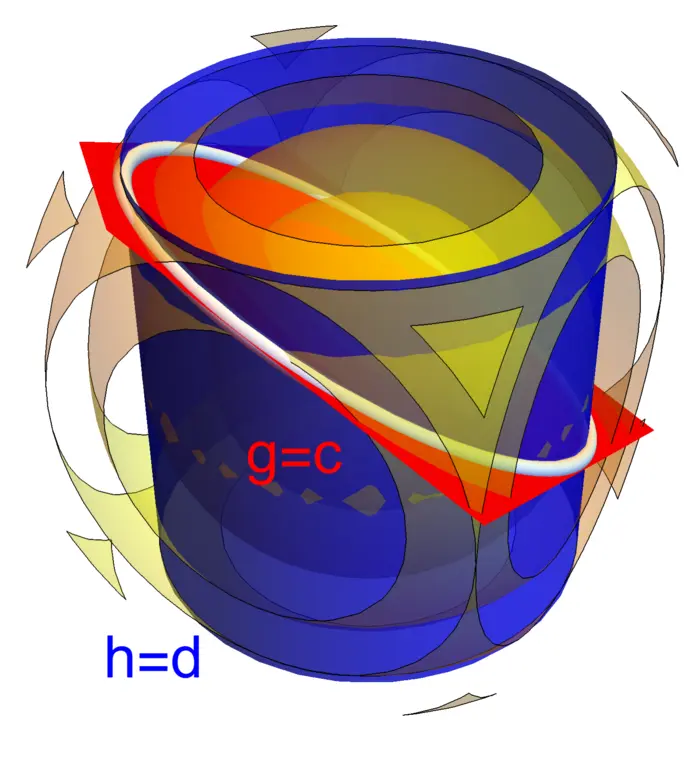

There is rarely a "free lunch". If we want to maximize a quantity, we often have to work with constraints. Obstacles might prevent us from changing the parameters arbitrarily. The gradient can still be used as a guiding principle. While we cannot achieve

20.1.2 Lagrange’s Magic: Many Constraints, One Solution

The method of Lagrange is much more general. We can work with arbitrarily many constraints and still use the same principle. The gradient of

20.2 LECTURE

20.2.1 Finding the Maximum in Confined Spaces

If we want to maximize a function

Theorem 1. If

Proof. By contradiction: assume

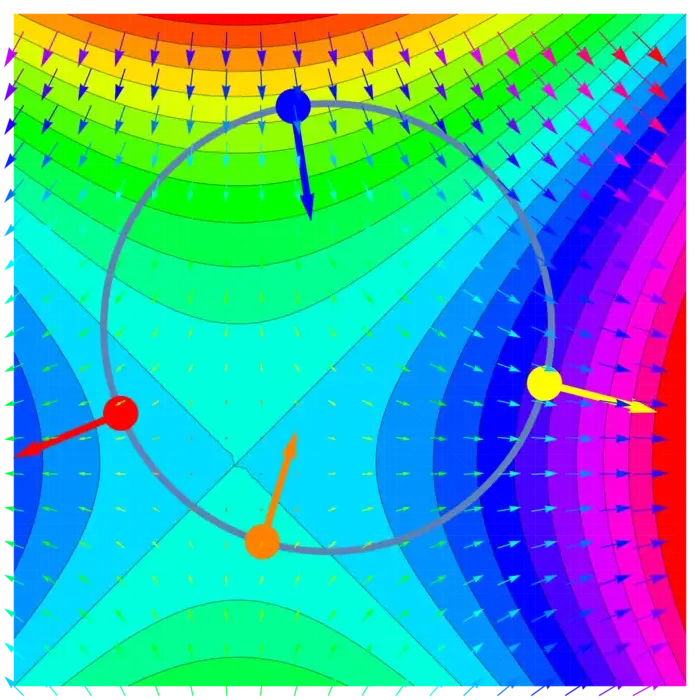

20.2.2 Exploring Lagrange Multipliers and Necessary Conditions

This immediately implies: (distinguish

Theorem 2. For a maximum of

For functions
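As a quick numerical illustration of the Lagrange equations $\nabla f = \lambda \nabla g$, $g = c$, the sketch below checks them on a hypothetical example that is not taken from the text: maximize $f(x,y) = x + y$ on the circle $g(x,y) = x^2 + y^2 = 2$. It finds the constrained maximum by brute force along the circle and then tests whether the two gradients are parallel there. The helper `grad`, the grid size `n` and the example functions are assumptions made for illustration only.

```python
import math

def grad(F, p, h=1e-6):
    """Central-difference approximation of the gradient of F at the point p."""
    out = []
    for i in range(len(p)):
        up = list(p)
        dn = list(p)
        up[i] += h
        dn[i] -= h
        out.append((F(*up) - F(*dn)) / (2 * h))
    return out

# Hypothetical example, not taken from the text.
def f(x, y):
    return x + y

def g(x, y):
    return x * x + y * y

c = 2.0
r = math.sqrt(c)

# Brute-force search for the maximum of f along the constraint circle g = c.
n = 3600
t_best = max((2 * math.pi * k / n for k in range(n)),
             key=lambda t: f(r * math.cos(t), r * math.sin(t)))
p = (r * math.cos(t_best), r * math.sin(t_best))

df = grad(f, p)
dg = grad(g, p)

# In two dimensions, parallel gradients have a vanishing cross product.
cross = df[0] * dg[1] - df[1] * dg[0]
# The Lagrange multiplier is the ratio of corresponding components.
lam = df[0] / dg[0]
print(p, cross, lam)
```

The brute-force search is only there to locate the maximum independently of the Lagrange equations. In practice one would solve the equations directly and compare the values of $f$ at the solutions.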

20.2.3 Finding the True Maximum

To find a maximum, solve the Lagrange equations and add a list of critical points of

Of course, the cases of maxima and minima are analogous. If

20.2.4 Lagrange’s Climb: Maximizing with Multiple Constraints

The method of Lagrange can maximize functions

For example, if
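With two constraints $g = c$ and $h = d$ in three dimensions, the Lagrange equations take the form $\nabla f = \lambda \nabla g + \mu \nabla h$, so at a constrained extremum the three gradients are linearly dependent. The following sketch checks this on a hypothetical example that is not from the text: maximize $f = x + 2y + 3z$ subject to $g = x - y + z = 1$ and $h = x^2 + y^2 = 1$. The parametrization `point`, the grid size `n` and the hand-computed gradients are assumptions for illustration.

```python
import math

def det3(a, b, c):
    """Determinant of the 3x3 matrix with rows a, b, c."""
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - a[1] * (b[0] * c[2] - b[2] * c[0])
            + a[2] * (b[0] * c[1] - b[1] * c[0]))

# Hypothetical example, not taken from the text.
def f(x, y, z):
    return x + 2 * y + 3 * z

def point(t):
    """Point on the curve cut out by both constraints:
    (x, y) on the unit circle h = 1, z chosen so that g = x - y + z = 1."""
    x, y = math.cos(t), math.sin(t)
    return (x, y, 1 - x + y)

# Brute-force search for the maximum of f along the constraint curve.
n = 200000
t_best = max((2 * math.pi * k / n for k in range(n)),
             key=lambda t: f(*point(t)))
x, y, z = point(t_best)
f_max = f(x, y, z)

# Gradients computed by hand for this example.
grad_f = (1.0, 2.0, 3.0)
grad_g = (1.0, -1.0, 1.0)
grad_h = (2 * x, 2 * y, 0.0)

# Linear dependence of the three gradients means the determinant vanishes.
d = det3(grad_f, grad_g, grad_h)

# Recover the multipliers: the third component gives lam, the first gives mu.
lam = grad_f[2] / grad_g[2]
mu = (grad_f[0] - lam * grad_g[0]) / grad_h[0]
print(f_max, d, lam, mu)
```

Solving the Lagrange equations by hand for this example gives the maximal value $3 + \sqrt{29}$, which the grid search reproduces up to the resolution of the grid.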

20.3 EXAMPLES

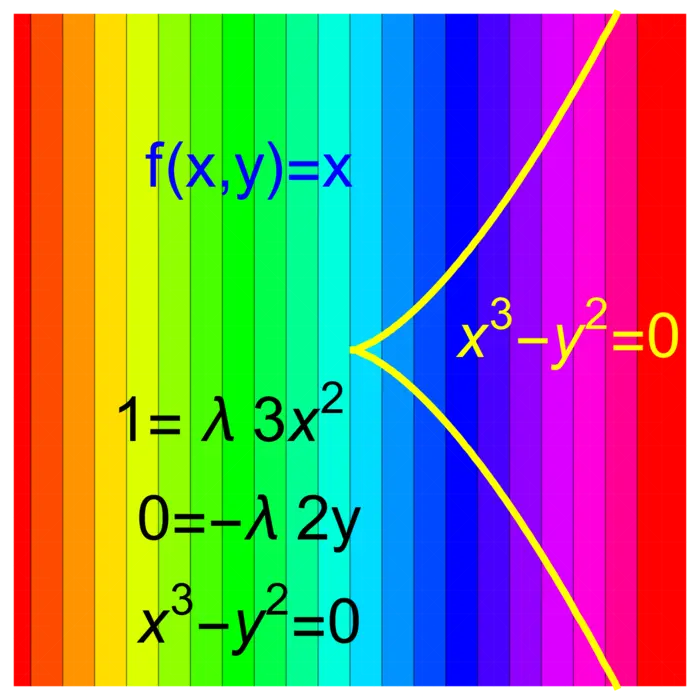

Example 1. Problem: Minimize

Solution: The Lagrange equations are

Example 2. Problem: Which cylindrical soda can of height

Solution: We have

Example 3. Problem: If

Find the distribution

Solution:

Example 4. Assume that the probability that a physical or chemical system is in a state

Solution:

Example 5. If

Example 6. Related to the previous remark is the following observation. It is often possible to reduce the Lagrange problem to a problem without constraint. This is a point of view often taken in economics. Let us look at it in dimension

EXERCISES

Exercise 1. Find the cylindrical basket which is open on the top and has the largest volume for fixed area

Exercise 2. Given a

Exercise 3. Which pyramid of height

Exercise 4. Motivated by the Disney movie "Tangled", we want to build a hot air balloon with a cuboid mesh of dimension

Exercise 5. A solid bullet made of a half sphere and a cylinder has the volume

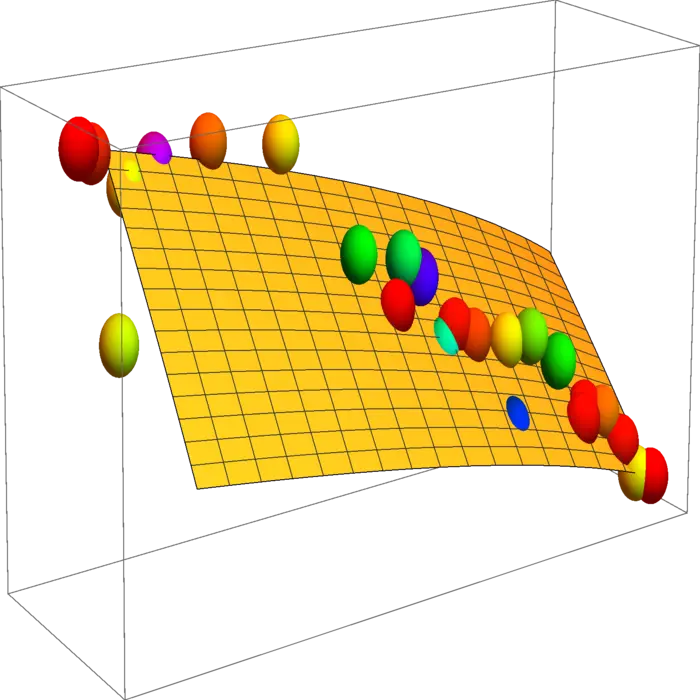

Appendix: Data illustration: Cobb Douglas

20.3.1 Cobb-Douglas: A Formula for Economic Growth

The mathematician and economist Charles W. Cobb at Amherst College and the economist and politician Paul H. Douglas, who was also teaching at Amherst, empirically found in 1928 a formula

| Year | K (capital) | L (labor) | P (production) |
|---|---|---|---|
| 1899 | 100 | 100 | 100 |
| 1900 | 107 | 105 | 101 |
| 1901 | 114 | 110 | 112 |
| 1902 | 122 | 118 | 122 |
| 1903 | 131 | 123 | 124 |
| 1904 | 138 | 116 | 122 |
| 1905 | 149 | 125 | 143 |
| 1906 | 163 | 133 | 152 |
| 1907 | 176 | 138 | 151 |
| 1908 | 185 | 121 | 126 |
| 1909 | 198 | 140 | 155 |
| 1910 | 208 | 144 | 159 |
| 1911 | 216 | 145 | 153 |
| 1912 | 226 | 152 | 177 |
| 1913 | 236 | 154 | 184 |
| 1914 | 244 | 149 | 169 |
| 1915 | 266 | 154 | 189 |
| 1916 | 298 | 182 | 225 |
| 1917 | 335 | 196 | 227 |
| 1918 | 366 | 200 | 223 |
| 1919 | 387 | 193 | 218 |
| 1920 | 407 | 193 | 231 |
| 1921 | 417 | 147 | 179 |
| 1922 | 431 | 161 | 240 |
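To see how a formula of Cobb-Douglas type can be read off from such data, here is a minimal sketch of a least-squares fit. It assumes the model $P = b L^{\alpha} K^{1-\alpha}$ and assumes that the three data columns of the table are, in order, capital $K$, labor $L$ and production $P$. Dividing by $K$ and taking logarithms turns the model into the line $\log(P/K) = \log b + \alpha \log(L/K)$, which can be fitted with ordinary linear regression. The variable names are chosen for illustration.

```python
import math

# Rows (year, K, L, P) copied from the table above.
# The column order capital K, labor L, production P is an assumption.
data = [
    (1899, 100, 100, 100), (1900, 107, 105, 101), (1901, 114, 110, 112),
    (1902, 122, 118, 122), (1903, 131, 123, 124), (1904, 138, 116, 122),
    (1905, 149, 125, 143), (1906, 163, 133, 152), (1907, 176, 138, 151),
    (1908, 185, 121, 126), (1909, 198, 140, 155), (1910, 208, 144, 159),
    (1911, 216, 145, 153), (1912, 226, 152, 177), (1913, 236, 154, 184),
    (1914, 244, 149, 169), (1915, 266, 154, 189), (1916, 298, 182, 225),
    (1917, 335, 196, 227), (1918, 366, 200, 223), (1919, 387, 193, 218),
    (1920, 407, 193, 231), (1921, 417, 147, 179), (1922, 431, 161, 240),
]

# Assumed model: P = b * L**alpha * K**(1 - alpha).
# In logarithms: log(P/K) = log(b) + alpha * log(L/K).
xs = [math.log(L / K) for _, K, L, P in data]
ys = [math.log(P / K) for _, K, L, P in data]

# Ordinary least squares for the line y = intercept + alpha * x.
n = len(xs)
mx = sum(xs) / n
my = sum(ys) / n
sxx = sum((x - mx) ** 2 for x in xs)
sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
alpha = sxy / sxx
b = math.exp(my - alpha * mx)

print(f"alpha = {alpha:.3f}, b = {b:.3f}")
```

Under these assumptions the fitted exponent comes out close to $3/4$ and the prefactor close to $1$. Fitting in logarithmic coordinates is a design choice: it keeps the problem linear, at the cost of minimizing relative rather than absolute errors in $P$.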

20.3.2 Visualizing Production Limits

Assume that the labor and capital investment are bound by the additional constraint

- This example is from Rufus Bowen, Lecture Notes in Math, 470, 1978